New Manual Post sounds simple, but in practice it sits at the intersection of control, repeatability, and operational efficiency. For developers and efficiency-focused users, that combination matters. Automated systems are fast, but they are not always appropriate. A manual post workflow provides deterministic input, explicit review, and a narrower risk surface when precision matters more than throughput.

Its real value is that it introduces intentional execution into an otherwise automated environment, which can improve quality, reduce accidental changes, and make sensitive publishing steps easier to audit. When teams need reliable checkpoints, manual posting becomes less of a fallback and more of a deliberate system design choice.

What is New Manual Post?

A New Manual Post refers to the creation and submission of a new entry, update, record, or publication through direct human action rather than through a scheduled job, API-triggered workflow, or automation pipeline. The exact implementation varies by platform, but the underlying pattern remains consistent. A user opens an interface, inputs content or data, applies required metadata, performs validation, and then publishes or saves.

In technical environments, this can describe several distinct actions. It may refer to publishing a blog post in a CMS without a content automation pipeline. It may describe creating a record in an internal admin dashboard. It may also refer to manually posting updates to a knowledge base, support portal, moderation queue, or deployment log. The term is broad, but the operational meaning is stable: a new item is created through manual intervention.

That distinction matters because manual creation changes the system’s behavior. Automated posts optimize for scale and consistency. Manual posts optimize for judgment and contextual awareness. A human can evaluate edge cases, account for timing, catch formatting anomalies, and recognize whether a post should exist at all. In environments where errors are expensive, that judgment layer is often worth the added time.

Why the concept matters in modern workflows

Many teams assume that efficiency means full automation. In reality, efficient systems are usually hybrid systems. They automate repetitive, low-risk steps and preserve manual control for critical decisions. A New Manual Post fits neatly into that model because it can function as a controlled insertion point inside a larger workflow.

For example, a development team might automate draft generation, metadata suggestions, and validation checks, then require a human to manually create or approve the final post. That approach keeps productivity high while reducing the chance of publishing incorrect or incomplete information. The manual step is not inefficiency. It is a control boundary.

This is especially useful where content, status updates, or records affect users directly. A mistaken product announcement, a malformed release note, or an incorrectly tagged documentation update can create downstream confusion that costs more than the time saved through automation. Manual posting introduces friction, but often the right kind of friction.

Key Aspects of New Manual Post

A New Manual Post workflow is defined by a few core characteristics: human initiation, explicit field entry, context-sensitive review, and direct publication control. These characteristics seem basic, but together they create a workflow pattern with distinct strengths and weaknesses.

Human initiation is the first defining factor. Nothing happens until a person decides to create the post. That means the act itself is intentional, and that intentionality changes quality outcomes. Teams can align a post with current business conditions, product changes, or internal approvals without needing to redesign automation rules every time a new edge case appears.

Explicit field entry is the second aspect. In a manual process, titles, tags, descriptions, attachments, references, and publishing settings are often entered or verified one by one. This slows things down slightly, but it also surfaces mistakes that automation can hide. A user noticing a missing category or malformed summary before publication is a common and valuable failure-prevention mechanism.

Control and accuracy

The strongest argument for New Manual Post is control. Manual workflows allow contributors to see exactly what is being submitted and in what state. This is particularly relevant for technical documentation, compliance updates, product notices, and any system where publication creates a durable record.

Accuracy benefits from that visibility. A person reviewing a post can catch semantic issues that validation rules might miss. An automated system may confirm that a field is filled in, but it cannot always determine whether the content is misleading, outdated, or contextually inappropriate. Manual posting adds a layer of editorial or operational sense-checking that is difficult to encode in software.

That is why many organizations preserve manual post paths even when they have mature automation stacks. They do not keep them because the automation is weak. They keep them because not every publishing decision can be reduced to rules.

Speed versus reliability

Manual posting is slower than automated posting, and that trade-off is real. If a team must publish thousands of low-risk records per hour, manual entry is the wrong mechanism. But where reliability is more important than raw throughput, the slower process often produces better outcomes.

This trade-off resembles the difference between batch processing and supervised release management. Batch systems are excellent for volume. Supervised systems are better for exceptions, approvals, and sensitive outputs. A New Manual Post belongs to the second category. It works best when each post carries enough importance to justify direct attention.

The practical question is not whether manual posting is slower. It is whether the cost of a bad post exceeds the cost of a slower one. In many cases, particularly in technical or customer-facing contexts, the answer is yes.

Traceability and governance

Another key aspect is governance. Manual workflows are easier to pair with role-based access, approval checkpoints, and audit trails. When a post is created manually, the responsible user, timestamp, revision state, and publishing action can be recorded with clarity. That is useful for internal accountability and often essential for regulated environments.

This is also where platform design matters. A weak manual posting interface can make users inconsistent and error-prone. A strong one supports predictable input, visible status indicators, and structured validation. Tools such as Home can improve this layer by centralizing manual workflows in a cleaner operational environment, reducing friction without removing control.

When manual posting is the better choice

There is no universal rule, but certain conditions strongly favor a New Manual Post workflow. It is usually the better option when content is high-impact, when approval context changes frequently, or when the source data is too variable for safe automation.

The table below summarizes the practical difference between manual and automated posting models.

| Factor | New Manual Post | Automated Post |
|---|---|---|
| Initiation | Human-triggered | System-triggered |
| Speed | Lower | Higher |
| Context awareness | Strong | Limited to programmed logic |
| Error prevention | Better for semantic and judgment-based issues | Better for repetitive structural consistency |
| Scalability | Limited by human capacity | High |
| Audit clarity | Often stronger at action level | Strong if logging is well implemented |
| Best use case | Sensitive, high-value, exception-based publishing | High-volume, repeatable, low-risk tasks |

How to Get Started with New Manual Post

Getting started with New Manual Post begins with clarifying what kind of post is being created, who is responsible for it, and what conditions must be satisfied before publication. Many manual workflows fail because they are treated as informal tasks. A reliable manual post process should still be structured, even if it is not automated.

The first step is to define the object model. A post may be content, a release note, a support update, a knowledge entry, or an internal record. Once that is clear, the required fields become easier to standardize. Standardization is important because it reduces variation without removing human control. The goal is not to script the post completely, but to ensure that every manually created item meets a minimum quality threshold.

A practical manual posting setup usually requires:

- A defined template, including mandatory fields and preferred formatting.

- A responsible owner, who creates or approves the post.

- A review rule, even if it is lightweight.

- A destination system, such as a CMS, internal admin dashboard, or unified workspace like Home.

Establish a repeatable workflow

A manual process becomes efficient only when it is repeatable. That means contributors should know where to start, what sequence to follow, and what validation to perform before publishing. Without that structure, manual posting becomes inconsistent and difficult to scale, even within a small team.

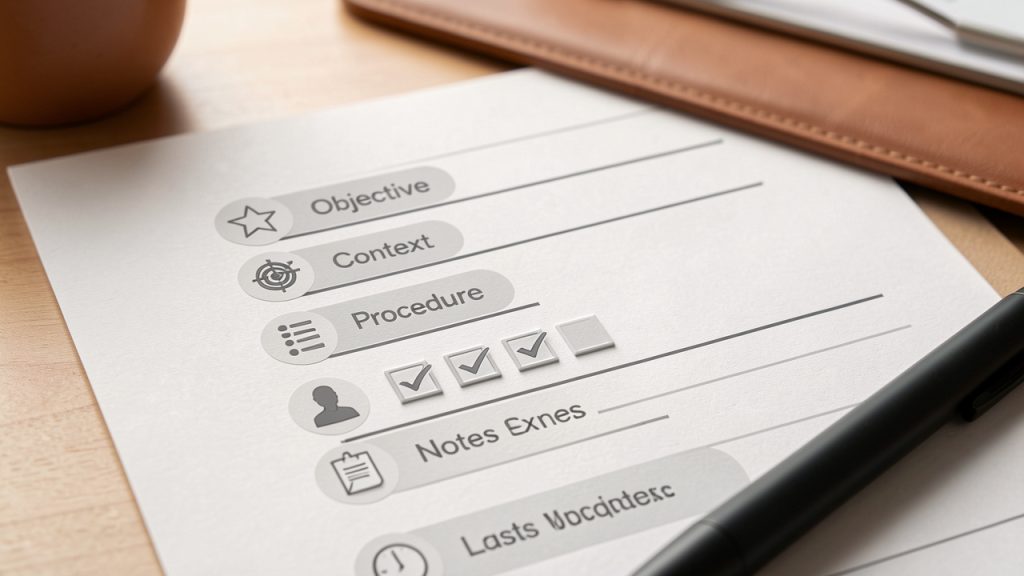

A good starting workflow often follows a simple sequence. The contributor creates the post, completes required fields, reviews formatting and metadata, verifies timing and destination, and then publishes. If approval is required, the publication step is replaced with a handoff state. Making each stage explicit reduces ambiguity and cuts down on avoidable errors.

The system interface matters here. If users need to switch between multiple tabs, documents, and dashboards just to create one post, manual work becomes unnecessarily expensive. Consolidated environments are more effective because they reduce context switching. That is one reason platforms like Home are valuable. They support efficiency not by forcing automation everywhere, but by making controlled manual actions faster and cleaner.

Define validation before publication

The most common weakness in a New Manual Post process is the absence of clear validation. People often assume that a manual process is self-explanatory. It rarely is. Even experienced users benefit from a short, consistent verification pass before final submission.

Validation should focus on correctness, completeness, and destination integrity. Correctness means the content itself is accurate. Completeness means required fields, tags, references, and attachments are present. Destination integrity means the post is going to the right place, under the right visibility, at the right time. A manual post can be well written and still fail operationally if it is published in the wrong environment.

Teams with frequent manual posting tasks often benefit from a lightweight checklist embedded directly in the interface. This is more effective than storing process documentation in a separate location that users forget to consult. The best validation is visible at the moment of action.

Reduce friction without removing oversight

The phrase “manual process” often suggests inefficiency, but that is usually a design problem rather than an inherent limitation. Manual posting becomes painful when interfaces are cluttered, field requirements are unclear, and users lack reusable patterns. Improve those three areas, and the process becomes much more efficient.

Templates are the first lever. They allow users to start from a known-good structure rather than a blank screen. Sensible defaults are the second lever. If a category, visibility level, or status is usually the same, the system should prepopulate it while still allowing edits. Contextual prompts are the third lever. They remind users what matters at the point of execution rather than burying guidance in documentation.
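Templates, defaults and contextual prompts can share one small structure. The sketch below assumes a release-note template; its field names, default values and prompt wording are hypothetical. User-supplied values always override defaults, so prepopulated fields stay editable.

```python
from dataclasses import dataclass, field


@dataclass
class Template:
    name: str
    defaults: dict[str, str]          # sensible defaults, prepopulated in the form
    required: list[str]               # fields that must be filled before publishing
    prompts: dict[str, str] = field(default_factory=dict)  # shown beside each field


# Hypothetical template for release notes.
RELEASE_NOTE = Template(
    name="release-note",
    defaults={"category": "release-notes", "visibility": "public", "status": "draft"},
    required=["title", "version", "summary"],
    prompts={
        "version": "Use the shipped version string.",
        "summary": "State what changed and who is affected.",
    },
)


def start_post(template: Template, **overrides: str) -> dict[str, str]:
    """Start from the template's defaults; any field the user supplies wins."""
    return {**template.defaults, **overrides}


def missing_with_prompts(template: Template, post: dict[str, str]) -> dict[str, str]:
    """Map each empty required field to the prompt that should appear beside it."""
    return {
        name: template.prompts.get(name, "Required field.")
        for name in template.required
        if not post.get(name, "").strip()
    }
```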

The objective is not to eliminate the manual step at all costs. The objective is to remove unnecessary effort while preserving human review where it creates value.

Practical implementation considerations

For developers, the term New Manual Post often raises an implementation question: how should a system support manual creation in a technically sound way? The answer usually involves interface design, permissions, auditability, and state management rather than complex algorithms.

A well-designed manual post system should clearly separate draft, review, and published states. It should also maintain revision history and identify the actor responsible for each transition. This makes the workflow legible and helps teams debug process failures. If a bad post goes live, the question should not be “what happened?” but “which transition failed and why?”
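One way to make the draft, review and published states explicit is a transition table plus a per-post history of who moved each post and when. This Python sketch assumes three states and treats `published` as terminal; a real system might allow unpublishing or new revisions. `State`, `TRANSITIONS` and `ManagedPost` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    PUBLISHED = "published"


# Allowed transitions; anything not listed is rejected.
TRANSITIONS: dict[State, set[State]] = {
    State.DRAFT: {State.IN_REVIEW},
    State.IN_REVIEW: {State.DRAFT, State.PUBLISHED},  # send back, or release
    State.PUBLISHED: set(),                           # terminal in this sketch
}


@dataclass
class Transition:
    from_state: State
    to_state: State
    actor: str
    at: datetime


@dataclass
class ManagedPost:
    title: str
    state: State = State.DRAFT
    history: list[Transition] = field(default_factory=list)

    def move_to(self, target: State, actor: str) -> None:
        """Apply a transition if allowed, recording the responsible actor."""
        if target not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {target.value} is not allowed")
        self.history.append(Transition(self.state, target, actor, datetime.now(timezone.utc)))
        self.state = target
```

Each accepted transition appends a record with its actor. Answering "which transition failed and why?" then comes down to reading `history` and the errors raised for rejected transitions.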

Permissions are equally important. Not every user who can draft should be able to publish. Not every user who can publish should be able to edit historical records. Manual systems become safer when these responsibilities are explicit. That applies whether the posting environment is a custom internal tool or a packaged platform.
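The separation between drafting, publishing and editing historical records maps naturally onto a role-to-permission table. The roles below (`contributor`, `editor`, `archivist`) are hypothetical examples. The sketch uses Python's `enum.Flag` so that one role can hold several permissions at once.

```python
from enum import Flag, auto


class Perm(Flag):
    DRAFT = auto()
    PUBLISH = auto()
    EDIT_HISTORICAL = auto()


# Hypothetical roles: each one is a combination of permissions.
ROLES: dict[str, Perm] = {
    "contributor": Perm.DRAFT,
    "editor": Perm.DRAFT | Perm.PUBLISH,
    "archivist": Perm.DRAFT | Perm.PUBLISH | Perm.EDIT_HISTORICAL,
}


def can(role: str, perm: Perm) -> bool:
    """True if the role grants every flag in `perm`; unknown roles get nothing."""
    granted = ROLES.get(role, Perm(0))
    return perm in granted


def require(role: str, perm: Perm) -> None:
    """Raise before the action runs, so a denied publish never reaches the system."""
    if not can(role, perm):
        raise PermissionError(f"role {role!r} lacks {perm.name}")
```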

Manual posting in hybrid systems

The most effective real-world architecture often combines manual and automated components. For instance, metadata might be suggested automatically, formatting may be validated by the system, and notification delivery can occur after publication without human involvement. The actual creation and release of the post, however, remains manual.
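The hybrid split can be sketched as a publisher where the human triggers `publish` and automated follow-up work, such as notifications, runs through registered hooks afterwards. `Publisher`, `on_publish` and the notification hook are hypothetical. A real system would more likely put notifications on a queue.

```python
from typing import Callable

PublishHook = Callable[[dict], None]


class Publisher:
    """A human calls publish(); registered hooks then run without further input."""

    def __init__(self) -> None:
        self.hooks: list[PublishHook] = []
        self.published: list[dict] = []

    def on_publish(self, hook: PublishHook) -> PublishHook:
        """Register an automated follow-up step; usable as a decorator."""
        self.hooks.append(hook)
        return hook

    def publish(self, post: dict, actor: str) -> None:
        # The manual decision is recorded first...
        record = {**post, "published_by": actor}
        self.published.append(record)
        # ...then automation handles the repetitive mechanics.
        for hook in self.hooks:
            hook(record)


publisher = Publisher()
outbox: list[str] = []


@publisher.on_publish
def notify_subscribers(post: dict) -> None:
    outbox.append(f"Notify subscribers: {post['title']}")
```

In this simple design, the post is already recorded as published by the time any hook raises. A notification failure therefore does not undo the human decision. Whether that is desirable depends on the system.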

This hybrid model gives teams the best of both approaches. Automation handles repetitive mechanics. People handle judgment, timing, and exception management. New Manual Post is therefore not the opposite of automation. It is often the human checkpoint inside an automated ecosystem.

That framing is useful because it prevents false choices. Teams do not need to decide between full manual control and full automation. They can design for both, assigning each part of the workflow to the mechanism that handles it best.

Conclusion

New Manual Post is more than a basic publish action. It is a workflow pattern built around control, accuracy, and accountable execution. For developers and efficiency-minded teams, its relevance comes from the fact that not every task should be automated, especially when a post carries operational, customer-facing, or compliance risk.

The next step is to evaluate where manual posting currently exists in the workflow, where it should exist, and where it creates unnecessary friction. If the process is critical, formalize it. If the interface is messy, simplify it. If the team is juggling too many tools, consider a centralized environment such as Home to make manual posting faster without sacrificing oversight.